Stop Faking It Till You Make It: Increase LLM Accuracy with RAG

Retrieval-augmented generation bridges the gap between LLMs and your private knowledge base. In this post, we’ll introduce RAG and discuss how to know if it’s the right strategy for your AI app.

“Sophisticated bullshit artists” is how François Chollet, creator of Keras, described LLMs. They’re confident, eloquent, and prone to making completely unsubstantiated claims. Still, François and many others are bullish.

Why the optimism? Because LLMs have the potential to be the ultimate digital assistant, and much more. But first, they’ll have to cite their sources properly and admit when they don’t know the answer. That’s possible with retrieval-augmented generation (RAG), the subject of this post and the key to building trust in your LLM.

When should you use RAG?

There’s a reason we’re starting with when to use RAG and not what RAG is. Product development, whether specifically for AI or not, is about solving users’ problems. Sometimes, RAG is overkill and an out-of-the-box LLM will perform just fine. So what user problems does RAG solve?

There are a few, and they largely stem from the limitations of how LLMs are trained:

- Accuracy and explainability: Users need to fact-check LLMs. Even when LLMs cite sources, there’s no guarantee that those sources are real.

- Data access: LLMs don’t have access to your private data, so they can’t draw on it when responding to users.

- Data freshness: LLMs are not always up to date. For instance, GPT-3.5’s training data cuts off in September 2021.

Other techniques like prompt engineering and model fine-tuning don’t solve quite the same problems as RAG. Because of context window limits, a prompt can only include so much additional information, and certainly not hundreds of pages of company PDFs. Meanwhile, fine-tuning is expensive and would be hard to keep up to date if you need your LLM to access your latest records.

So, if your users are complaining about hallucinations and you need a cost-effective solution, RAG can let you hook up a trusted knowledge base into your LLM application. Let’s see how it works.

How does RAG work?

RAG sidesteps context window limits by selectively retrieving information relevant to the user’s query and adding that context to the LLM’s prompt. Your knowledge base can be arbitrarily large, and you can update it continually. The LLM only ever accesses the subset of your proprietary data it needs, which also makes it easy to trace how the LLM generated its output.

Implementing RAG usually happens in two parts. First, you assemble your knowledge base in a format that’s optimized for retrieval queries. Then, for any given query, you extract the right information to supply to the LLM.
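To make the two parts concrete, here is a minimal sketch in plain Python. It is an illustration under loud assumptions, not how any particular framework works: the word-count "embedding" stands in for a real embedding model, and the function names (`build_index`, `retrieve`, `build_prompt`), the sample documents, and the prompt wording are all made up for this example.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def build_index(docs: list[str]) -> list[tuple[str, Counter]]:
    """Part 1: store each document next to its vector, ready for retrieval."""
    return [(doc, embed(doc)) for doc in docs]


def retrieve(index: list[tuple[str, Counter]], query: str, top_k: int = 2) -> list[str]:
    """Part 2a: return the top_k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:top_k]]


def build_prompt(query: str, context: list[str]) -> str:
    """Part 2b: put the retrieved context, numbered for citation, into the prompt."""
    sources = "\n".join(f"[{i + 1}] {c}" for i, c in enumerate(context))
    return (
        "Answer using only the sources below and cite them by number. "
        "If the answer is not in the sources, say you don't know.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )


# Hypothetical knowledge base.
docs = [
    "Refunds are issued within 14 days of a return request.",
    "Our office is closed on public holidays.",
    "Enterprise plans include single sign-on and audit logs.",
]
index = build_index(docs)
question = "How long do refunds take?"
context = retrieve(index, question, top_k=1)
prompt = build_prompt(question, context)
print(prompt)
```

The string in `prompt` is what you would send to the LLM. Because the sources are numbered, the model’s answer can point back to the exact passages it relied on.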

LlamaIndex is one of the leading frameworks for RAG. It provides data connectors for ingesting data from a variety of sources like Google Docs or MongoDB. That data gets loaded into indexes, of which there are several types. The most popular is a vector store index, which organizes documents according to their embedding representations.

At inference time, a vector store index makes it efficient to embed the user’s input and retrieve the most similar documents (or really, subsets of documents called nodes). The documents most similar to the query are probably very similar to each other, too, so there are techniques for minimizing redundancy and selecting diverse sources to give the LLM.
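One common technique for trading relevance against redundancy is maximal marginal relevance (MMR). The post doesn’t name a specific method, so treat the following as one possible sketch: the `mmr` function, its `lambda_mult` parameter, and the two-dimensional example vectors are assumptions chosen for illustration.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two dense vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def mmr(
    query_vec: list[float],
    node_vecs: list[list[float]],
    top_k: int = 2,
    lambda_mult: float = 0.5,
) -> list[int]:
    """Greedily pick node indices that are relevant to the query but not
    redundant with nodes already picked. lambda_mult=1.0 is pure relevance."""
    selected: list[int] = []
    candidates = list(range(len(node_vecs)))
    while candidates and len(selected) < top_k:

        def score(i: int) -> float:
            relevance = cosine(query_vec, node_vecs[i])
            redundancy = max(
                (cosine(node_vecs[i], node_vecs[j]) for j in selected),
                default=0.0,
            )
            return lambda_mult * relevance - (1 - lambda_mult) * redundancy

        best = max(candidates, key=score)
        selected.append(best)
        candidates.remove(best)
    return selected


# Hypothetical embeddings: node 1 is a near duplicate of node 0.
query = [0.8, 0.6]
nodes = [
    [1.0, 0.5],   # node 0: most relevant
    [1.0, 0.45],  # node 1: almost the same content as node 0
    [0.2, 1.0],   # node 2: less relevant, but covers different ground
]
diverse = mmr(query, nodes, top_k=2, lambda_mult=0.5)
relevance_only = mmr(query, nodes, top_k=2, lambda_mult=1.0)
print(diverse, relevance_only)
```

With `lambda_mult=0.5` the near duplicate is skipped in favor of node 2, while pure relevance ranking would hand the LLM two nearly identical passages.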

That’s just a high-level overview of the RAG pipeline. You have a lot of options for tailoring RAG to your use case, as we’ll discuss next.

Customize RAG to meet your product requirements

A vanilla RAG implementation will only get you so far. Some of RAG’s shortcomings include:

- Latency: Retrieval adds an extra (often time-consuming) step before your LLM can generate its final output.

- Complex reasoning: RAG may provide your LLM with additional context, but it doesn’t teach it how to reason about that context. You can’t just give an LLM some plan diagrams and expect it to turn into an architect.

- Output quality: You may recall accuracy being pitched as one of RAG’s strengths, not a weakness. Well, it depends. If your knowledge base is low quality or poorly organized, RAG will amplify that. Your LLM may also anchor on the retrieved data at the expense of creativity.

The key is understanding which of these issues affect your product and which have the biggest impact on users. In some cases, such as when complex reasoning is what your model is missing, you should complement RAG with prompt engineering or model fine-tuning.

Even within RAG, however, there are plenty of advanced concepts that can help you squeeze out performance. For example, look into postprocessors for transforming data after retrieving it but before sending it to your LLM. Or try tuning various parameters like the amount of context you retrieve or the granularity at which you chunk documents into nodes for searching.
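As a rough illustration of two of those knobs, the sketch below chunks a document at different granularities and applies a simple postprocessor that drops weakly matching nodes. The helper names (`chunk_words`, `drop_low_scores`), the word-based chunking, and the cutoff value are assumptions for this example; real frameworks typically chunk by tokens or sentences and ship their own postprocessors.

```python
def chunk_words(text: str, chunk_size: int, overlap: int = 0) -> list[str]:
    """Split text into chunks of chunk_size words; consecutive chunks share
    `overlap` words so context isn't cut off at a chunk boundary."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


def drop_low_scores(
    scored_nodes: list[tuple[str, float]], cutoff: float
) -> list[tuple[str, float]]:
    """Postprocessor: keep only retrieved nodes whose similarity score
    meets the cutoff, before anything is sent to the LLM."""
    return [(node, score) for node, score in scored_nodes if score >= cutoff]


text = "one two three four five six seven eight nine ten"
coarse = chunk_words(text, chunk_size=5)           # fewer, larger nodes
fine = chunk_words(text, chunk_size=4, overlap=2)  # more, overlapping nodes

# Hypothetical retrieval scores for two nodes.
kept = drop_low_scores([("relevant node", 0.9), ("weak match", 0.2)], cutoff=0.5)
print(coarse, fine, kept, sep="\n")
```

Smaller chunks make matches more precise but give the LLM less surrounding context per node, so the right setting depends on your documents and queries.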

Before investing in advanced techniques, make sure your foundation is strong. If your index lacks the knowledge your LLM needs to serve your users, no amount of further tinkering will help. You have to first identify the key knowledge gaps and then assemble your dataset accordingly.

How you organize your data can make a large difference, too. For instance, if your data has a hierarchical structure, a tree index might be a better representation than a vector store index. Or consider a keyword table index if you want to match keywords in users’ queries to your documents.
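To show the idea behind a keyword table, here is a small sketch that maps keywords to the documents containing them and ranks documents by how many query keywords they match. It illustrates the concept only and is not LlamaIndex’s implementation; the stopword list, function names, and sample documents are invented for the example.

```python
import re
from collections import defaultdict

# A tiny, hand-picked stopword list (an assumption for this example).
STOPWORDS = {
    "a", "an", "the", "is", "are", "of", "to", "in", "on", "for",
    "and", "how", "do", "i", "what", "my",
}


def keywords(text: str) -> set[str]:
    """Lowercase words in the text, minus stopwords."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def build_keyword_table(docs: dict[str, str]) -> dict[str, set[str]]:
    """Map each keyword to the IDs of the documents that contain it."""
    table: dict[str, set[str]] = defaultdict(set)
    for doc_id, text in docs.items():
        for kw in keywords(text):
            table[kw].add(doc_id)
    return table


def lookup(table: dict[str, set[str]], query: str) -> list[str]:
    """Return document IDs ordered by number of matching query keywords."""
    hits: dict[str, int] = defaultdict(int)
    for kw in keywords(query):
        for doc_id in table.get(kw, ()):
            hits[doc_id] += 1
    return sorted(hits, key=lambda d: (-hits[d], d))


# Hypothetical documents.
docs = {
    "billing": "Invoices are emailed on the first day of each month.",
    "security": "Rotate API keys every 90 days.",
    "onboarding": "New users receive API keys by email after signup.",
}
table = build_keyword_table(docs)
matches = lookup(table, "How do I rotate my API keys?")
print(matches)
```

Exact keyword matching like this works well for product names, error codes, and other terms users type verbatim, but it misses paraphrases that an embedding-based index would catch.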

Then there’s metadata. Rather than searching only raw document data, you can use metadata labels to increase RAG’s accuracy and speed. This way, your retrieval algorithm doesn’t have to search over large volumes of noisy raw data. Instead, you’d search over the high-signal metadata and return the corresponding full-length documents.

For example, metadata about employee records may just list names, roles, demographic information, and a brief summary of the records associated with each employee. RAG would match user queries to the right person on the org chart and only then pull their records (which could be a couple of orders of magnitude larger than the metadata).

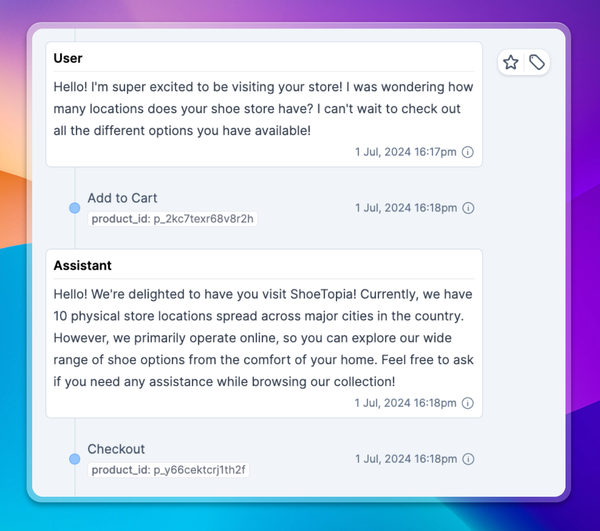
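The employee-records example could be sketched as a two-step lookup: match the query against compact metadata, then fetch the full record only for the match. Everything here is hypothetical, including the `EmployeeMeta` fields, the sample employees, and the word-overlap matching, which stands in for whatever retrieval method you actually use.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class EmployeeMeta:
    employee_id: str
    name: str
    role: str
    summary: str  # brief description of the records held for this person


# Hypothetical metadata (small) and full records (potentially very large).
METADATA = [
    EmployeeMeta("E1", "Ada Park", "Staff Engineer",
                 "Performance reviews and promotion history"),
    EmployeeMeta("E2", "Ben Ortiz", "Account Executive",
                 "Sales quotas and commission statements"),
]
FULL_RECORDS = {
    "E1": "Full personnel file for Ada Park ...",
    "E2": "Full personnel file for Ben Ortiz ...",
}


def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def match_employee(query: str, metadata: list[EmployeeMeta]) -> Optional[EmployeeMeta]:
    """Step 1: search only the compact, high-signal metadata."""
    q = tokens(query)

    def overlap(m: EmployeeMeta) -> int:
        return len(q & tokens(f"{m.name} {m.role} {m.summary}"))

    best = max(metadata, key=overlap)
    return best if overlap(best) > 0 else None


def retrieve_records(query: str) -> Optional[str]:
    """Step 2: pull the full-length record only for the matched employee."""
    match = match_employee(query, METADATA)
    return FULL_RECORDS[match.employee_id] if match else None


record = retrieve_records("What were Ben Ortiz's commission statements?")
no_match = retrieve_records("parking policy")
print(record, no_match)
```

Returning `None` when nothing in the metadata matches gives the application a clean signal to tell the user it doesn’t know, rather than passing an unrelated record to the LLM.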

Understand your users with Context.ai to calibrate your RAG pipeline

Keeping a pulse on your users is core to deciding both how to customize your RAG system and whether RAG is the right tool for the job in the first place. Look for patterns in how your product is performing and use those to curate and structure your knowledge base.

Context.ai gives you visibility into what your users are saying to your LLM, both at the level of individual transcripts and aggregate clusters. With those insights, you’ll have actionable ways to improve your product. To learn more, schedule a demo today.